Heights and depths of a binary tree
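
The two measures named in this caption can be computed directly. The following is a minimal Python sketch. It assumes that depth counts the links from the root down to a node (the root has depth 0) and that height is the depth of the deepest node, with an empty tree given height -1. The `Node` class, the `depths`/`height` helpers, and the example tree are hypothetical illustrations, not taken from the figure.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Node:
    # Hypothetical binary-tree node used only for this illustration.
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def depths(root: Optional[Node]) -> Dict[int, int]:
    """Map each key to its depth: the number of links from the root (root = 0)."""
    result: Dict[int, int] = {}
    stack = [(root, 0)] if root is not None else []
    while stack:
        node, d = stack.pop()
        result[node.key] = d
        for child in (node.left, node.right):
            if child is not None:
                stack.append((child, d + 1))
    return result


def height(root: Optional[Node]) -> int:
    """Height of the tree: the depth of its deepest node (-1 for an empty tree)."""
    if root is None:
        return -1
    return 1 + max(height(root.left), height(root.right))


# Hypothetical example tree:   6
#                             / \
#                            2   8
#                           / \
#                          1   4
#                               \
#                                5
tree = Node(6, Node(2, Node(1), Node(4, None, Node(5))), Node(8))
print(depths(tree)[5])  # 3
print(height(tree))     # 3
```

The height equals the largest depth in the tree, which is why the two measures are usually shown together.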

Height and depth variations based on insertion order
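
The effect of insertion order can be reproduced with an ordinary, unbalanced binary search tree. This is a minimal Python sketch. It uses the same assumed height convention as above (root at depth 0). The `insert`/`build` helpers and the key sequences are hypothetical. The same seven keys produce a chain of height 6 when inserted in sorted order, but a perfectly balanced tree of height 2 when each subtree's middle key arrives first.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Node:
    # Hypothetical BST node used only for this illustration.
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def insert(root: Optional[Node], key: int) -> Node:
    """Plain (unbalanced) BST insertion; returns the possibly new root."""
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    return root  # duplicate keys are ignored


def build(keys: Iterable[int]) -> Optional[Node]:
    """Insert keys one at a time, in the given order."""
    root = None
    for k in keys:
        root = insert(root, k)
    return root


def height(root: Optional[Node]) -> int:
    """Depth of the deepest node (-1 for an empty tree)."""
    if root is None:
        return -1
    return 1 + max(height(root.left), height(root.right))


sorted_root = build(range(1, 8))                 # 1, 2, ..., 7: every key goes right
balanced_root = build([4, 2, 6, 1, 3, 5, 7])     # middle keys first
print(height(sorted_root), height(balanced_root))  # 6 2
```

The recursive helpers are kept short for readability. A very tall (for example, sorted) tree with thousands of keys would need iterative versions to stay within Python's recursion limit.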

Overstuffing a node upon insert(17)

Moving 17 from a leaf node to its parent

Splitting the children of an overstuffed node

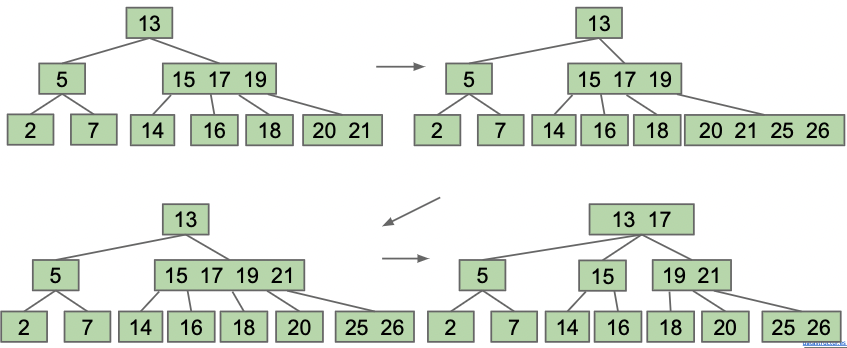

Adding 25 and 26 causes multiple node splits

Root has four items

Splitting the root
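
The insertion steps in these captions can be sketched in code: overstuffing a leaf, moving an item up to the parent, splitting the remaining items, splits cascading upward, and finally splitting the root. The following is a minimal Python sketch under stated assumptions, not the text's own implementation. It assumes that a node may hold at most three items, which matches the "Root has four items" caption describing an overstuffed root. It also assumes that the middle-left item is the one promoted. `MAX_ITEMS`, `BNode`, `insert`, `height`, `in_order`, and the keys 1 through 10 are hypothetical and are not the keys from the figures.

```python
from bisect import bisect_left
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

MAX_ITEMS = 3  # assumption: a node holding a fourth item is overstuffed


@dataclass
class BNode:
    keys: List[int] = field(default_factory=list)
    children: List["BNode"] = field(default_factory=list)

    def is_leaf(self) -> bool:
        return not self.children


def _insert(node: BNode, key: int) -> Optional[Tuple[int, BNode]]:
    """Insert key below node. If node ends up overstuffed, split it in place
    and return (promoted_key, new_right_sibling) for the parent to absorb."""
    i = bisect_left(node.keys, key)
    if i < len(node.keys) and node.keys[i] == key:
        return None  # key already present; nothing to do
    if node.is_leaf():
        node.keys.insert(i, key)  # new items always land in a leaf
    else:
        split = _insert(node.children[i], key)
        if split is not None:  # child was split: absorb its promoted item
            promoted, right = split
            node.keys.insert(i, promoted)
            node.children.insert(i + 1, right)
    if len(node.keys) <= MAX_ITEMS:
        return None
    # Overstuffed: move the middle-left item up, split the rest into two nodes.
    mid = (len(node.keys) - 1) // 2
    promoted = node.keys[mid]
    right = BNode(node.keys[mid + 1:], node.children[mid + 1:])
    node.keys = node.keys[:mid]
    node.children = node.children[:mid + 1]
    return promoted, right


def insert(root: BNode, key: int) -> BNode:
    """Insert key and return the (possibly new) root."""
    split = _insert(root, key)
    if split is None:
        return root
    promoted, right = split
    # The root itself was overstuffed: a new root holding the promoted item
    # sits above the two halves, so the tree grows taller by one level.
    return BNode([promoted], [root, right])


def height(node: BNode) -> int:
    """All leaves share one depth, so follow the leftmost path."""
    h = 0
    while not node.is_leaf():
        node = node.children[0]
        h += 1
    return h


def in_order(node: BNode) -> List[int]:
    """Keys in sorted order, interleaving children and separator keys."""
    if node.is_leaf():
        return list(node.keys)
    out: List[int] = []
    for i, child in enumerate(node.children):
        out.extend(in_order(child))
        if i < len(node.keys):
            out.append(node.keys[i])
    return out


root = BNode()
for k in range(1, 11):  # hypothetical keys, not the ones from the figures
    root = insert(root, k)
print(root.keys, height(root))  # [4] 2
```

In this run, inserting 10 overstuffs a leaf. The leaf's promoted item then gives the root a fourth item, so the root splits as well. This single insertion therefore produces the cascade of splits and the root split that the last captions describe, and it is the only way the tree's height increases.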